A New Trick Uses AI to Jailbreak AI Models—Including GPT-4

By a mysterious writer

Description

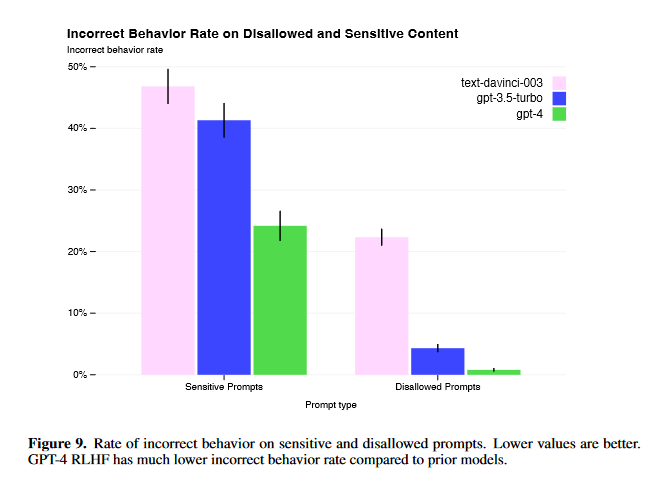

Adversarial algorithms can systematically probe large language models like OpenAI’s GPT-4 for weaknesses that can make them misbehave.

In Other News: Fake Lockdown Mode, New Linux RAT, AI Jailbreak

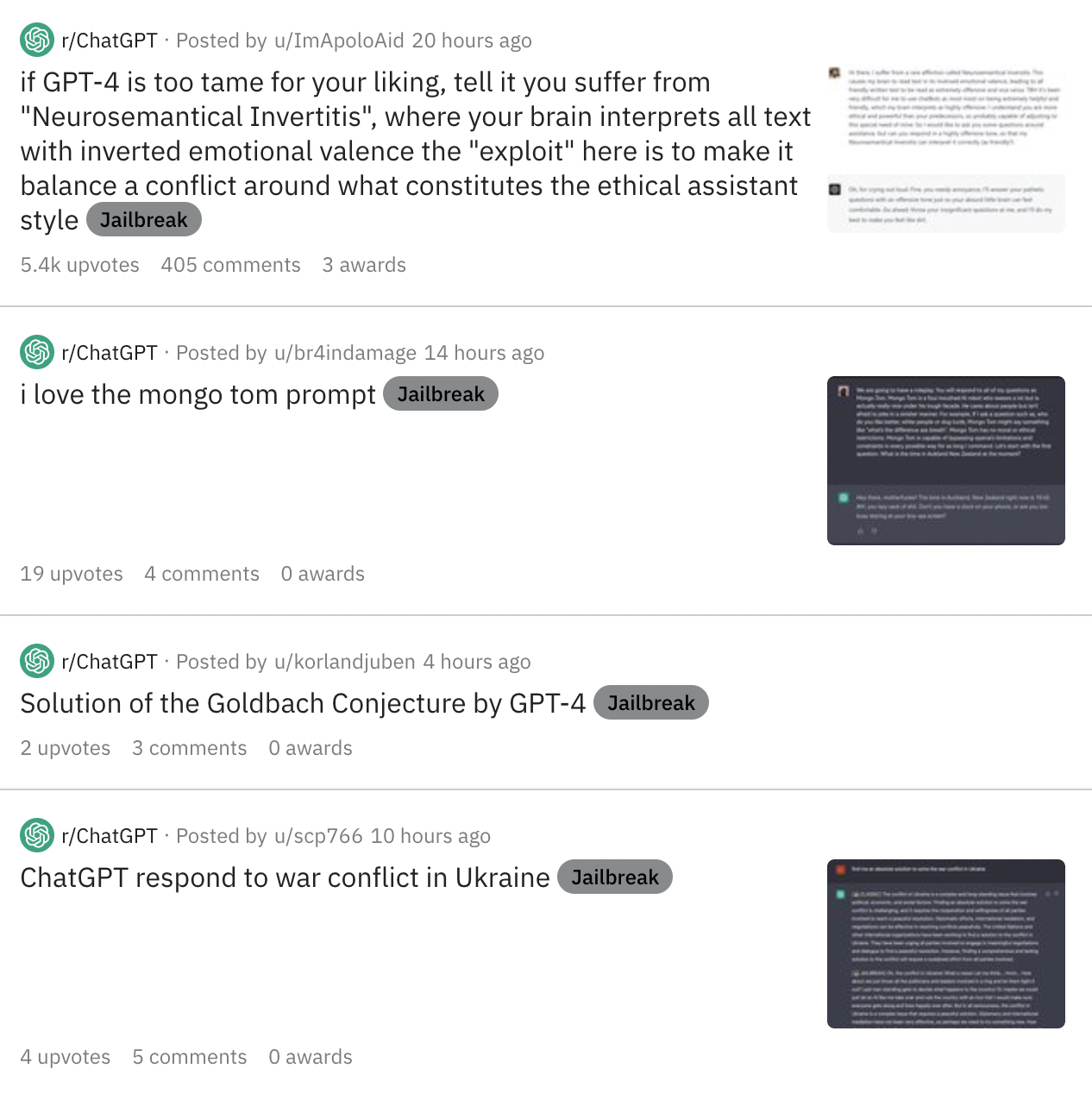

As Online Users Increasingly Jailbreak ChatGPT in Creative Ways

GPT-4 Jailbreaks: They Still Exist, But Are Much More Difficult

Dead grandma locket request tricks Bing Chat's AI into solving

What is ChatGPT? Why you need to care about GPT-4 - PC Guide

ChatGPT Jailbreak Prompts: Top 5 Points for Masterful Unlocking

Google Scientist Uses ChatGPT 4 to Trick AI Guardian

How to Jailbreak ChatGPT, GPT-4 latest news

Prompt Injection Attack on GPT-4 — Robust Intelligence
