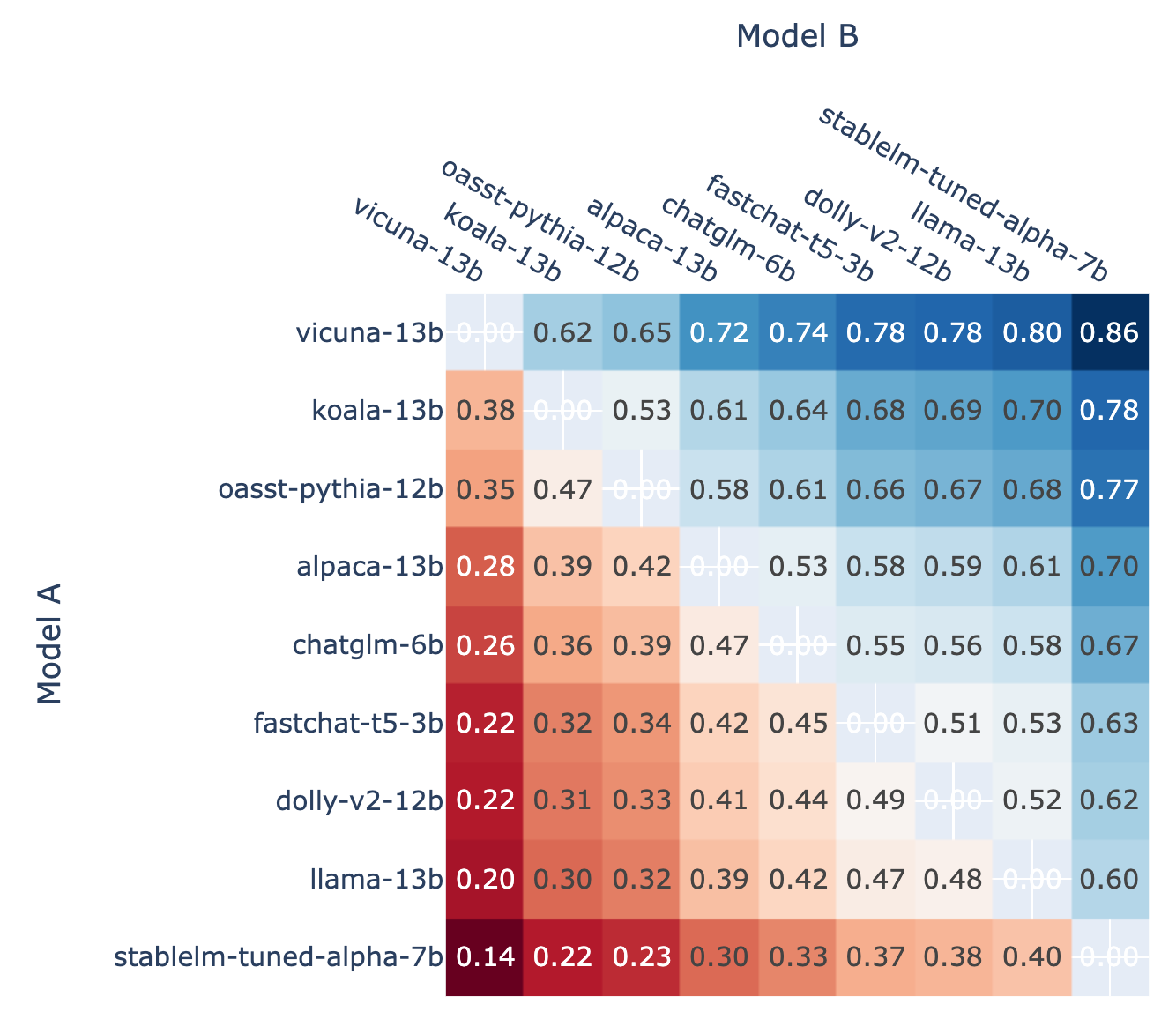

Chatbot Arena: Benchmarking LLMs in the Wild with Elo Ratings

By a mysterious writer

Description

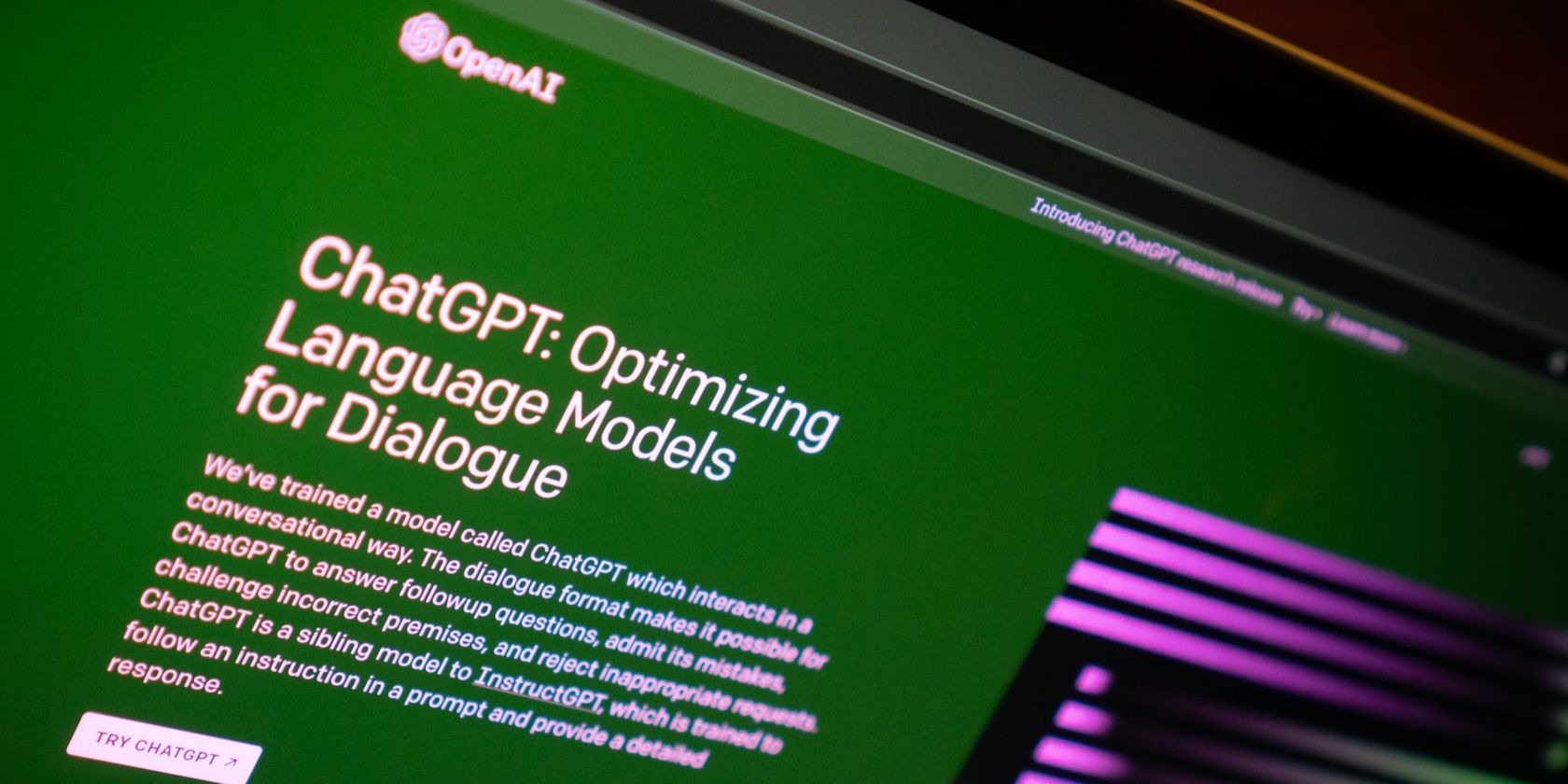

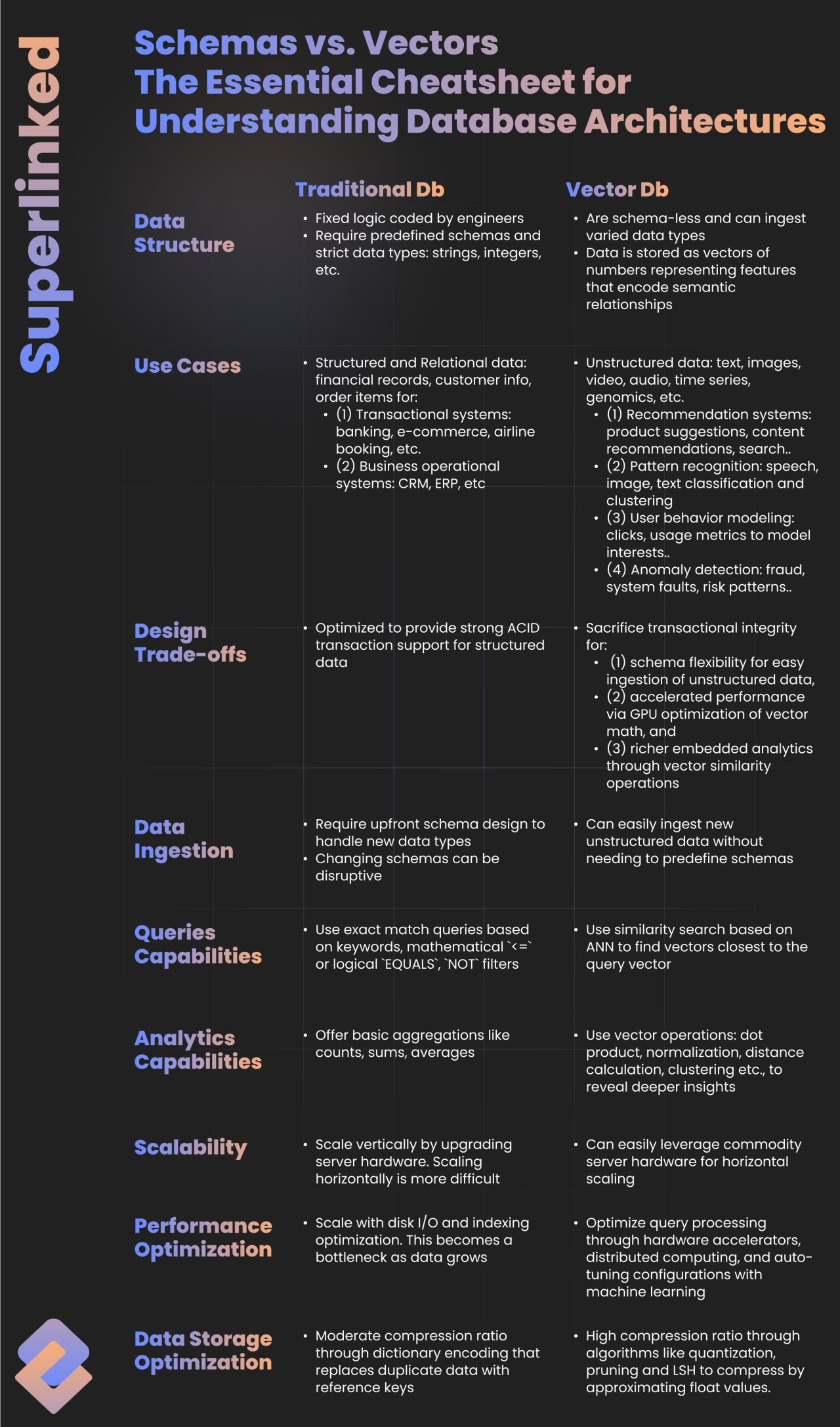

We present Chatbot Arena, a benchmark platform for large language models (LLMs) that features anonymous, randomized battles in a crowdsourced manner. In t

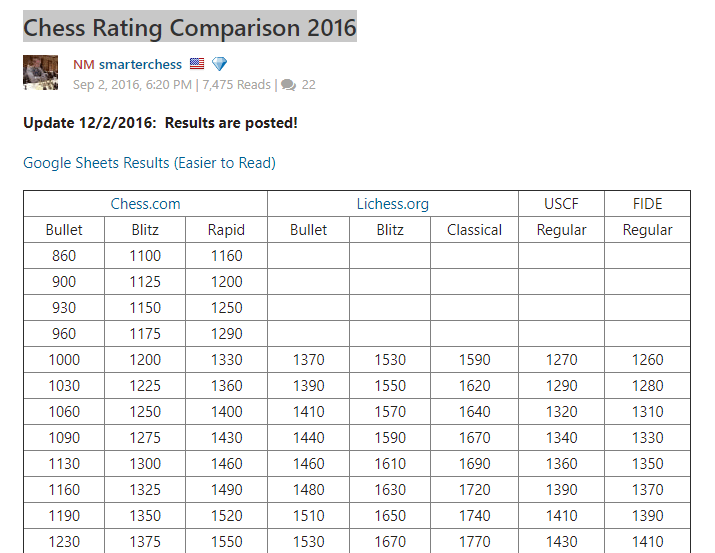

Will any LLM score above 1200 Elo on the Chatbot Arena Leaderboard in 2023?

WizardLM on X: 🎉The @lmsysorg just updated the latest Chatbot Arena and MT-Bentch! Our WizardLM-13B V1.2 model becomes the 🏆 SOTA 13B on both leaderboards with: 🥇 1046 Arena Elo rating 🥇

Around the Block podcast with Launchnodes: 101 on Solo Staking : r/ethereum

Sachin Kumar on LinkedIn: #llms #generativeai

LLM Benchmarking: How to Evaluate Language Model Performance, by Luv Bansal, MLearning.ai, Nov, 2023

How to Use Chatbot Arena to Compare the Best LLMs

Vinija's Notes • Primers • Overview of Large Language Models

Knowledge Zone AI and LLM Benchmarks

