8 Advanced parallelization - Deep Learning with JAX

By a mysterious writer

Description

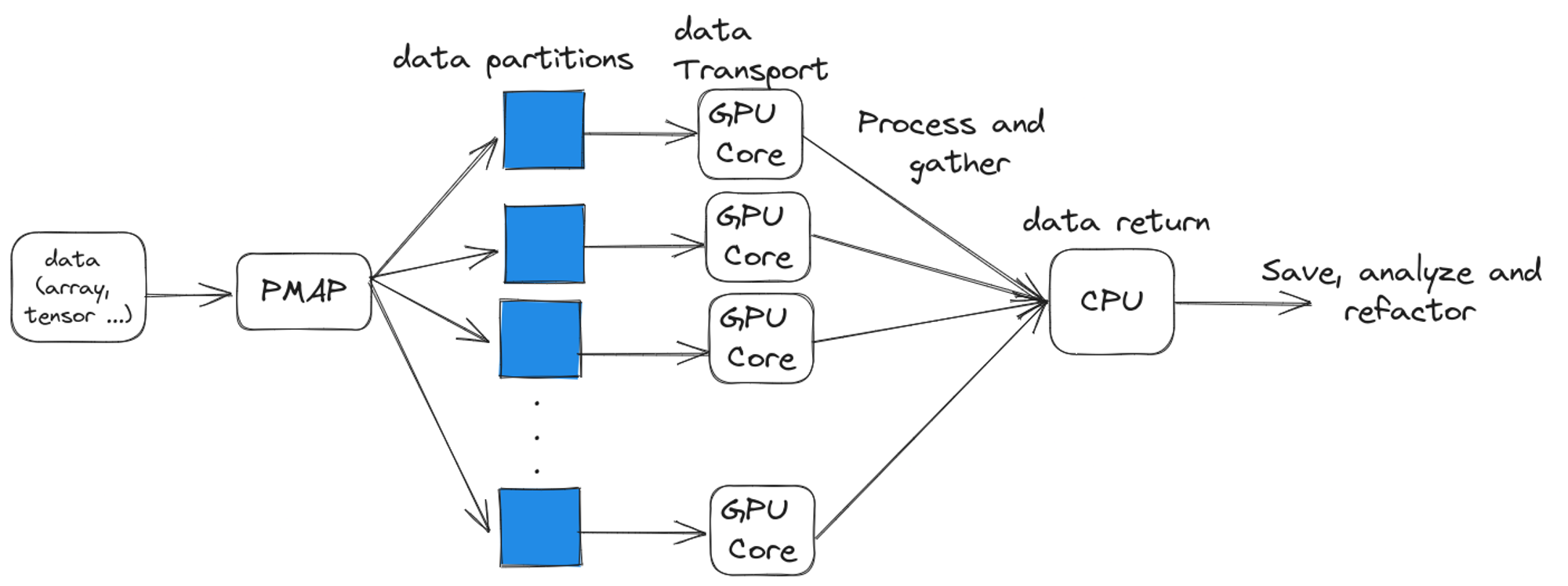

Using easy-to-revise parallelism with xmap() · Compiling and automatically partitioning functions with pjit() · Using tensor sharding to achieve parallelization with XLA · Running code in multi-host configurations
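As a rough illustration of the tensor-sharding topic listed above, here is a minimal sketch that places an array on a one-dimensional device mesh and lets XLA partition a jitted function over it. It assumes a recent JAX release in which `jax.jit` handles sharded inputs directly, so it uses `jax.jit` with `jax.sharding` rather than `pjit()` itself. The mesh axis name `"data"` and the function `normalize` are hypothetical names chosen for this example and do not come from the chapter.

```python
import jax
import jax.numpy as jnp
import numpy as np
from jax.sharding import Mesh, NamedSharding, PartitionSpec as P

# Build a 1D mesh over whatever devices are available (possibly a single CPU).
devices = np.array(jax.devices())
mesh = Mesh(devices, axis_names=("data",))  # "data" is an illustrative axis name

# Make the batch size a multiple of the device count so it splits evenly.
n = len(devices)
x = jnp.arange(n * 4 * 3, dtype=jnp.float32).reshape(n * 4, 3)

# Shard the leading (batch) axis across the mesh; keep the feature axis whole.
x_sharded = jax.device_put(x, NamedSharding(mesh, P("data", None)))

@jax.jit
def normalize(a):
    # Row-wise standardization; the compiler propagates the input sharding.
    mean = a.mean(axis=1, keepdims=True)
    std = a.std(axis=1, keepdims=True)
    return (a - mean) / (std + 1e-6)

y = normalize(x_sharded)
print(y.shape, y.sharding)
```

On a single-device machine the mesh has one entry and the code runs unchanged, which makes it easy to check the logic locally before moving to a multi-device setup.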

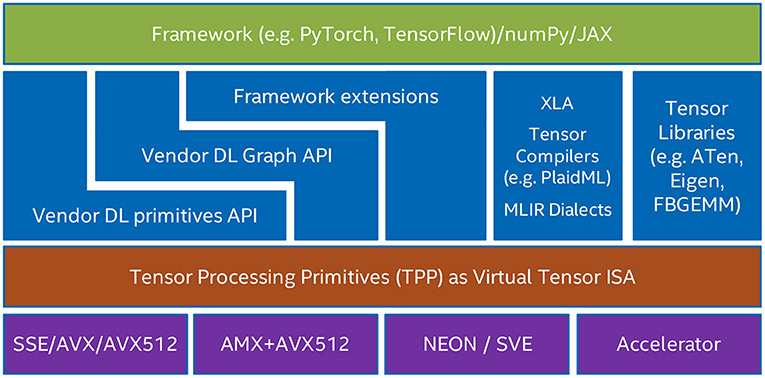

Frontiers Tensor Processing Primitives: A Programming

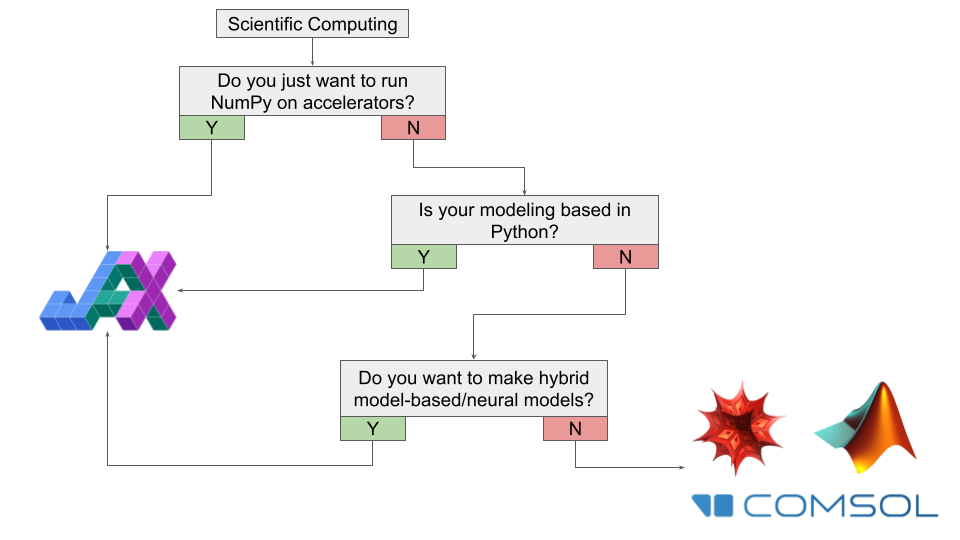

Breaking Up with NumPy: Why JAX is Your New Favorite Tool

What is Google JAX? Everything You Need to Know - Geekflare

Tutorial 2 (JAX): Introduction to JAX+Flax — UvA DL Notebooks v1.2

Why You Should (or Shouldn't) be Using Google's JAX in 2023

Compiler Technologies in Deep Learning Co-Design: A Survey

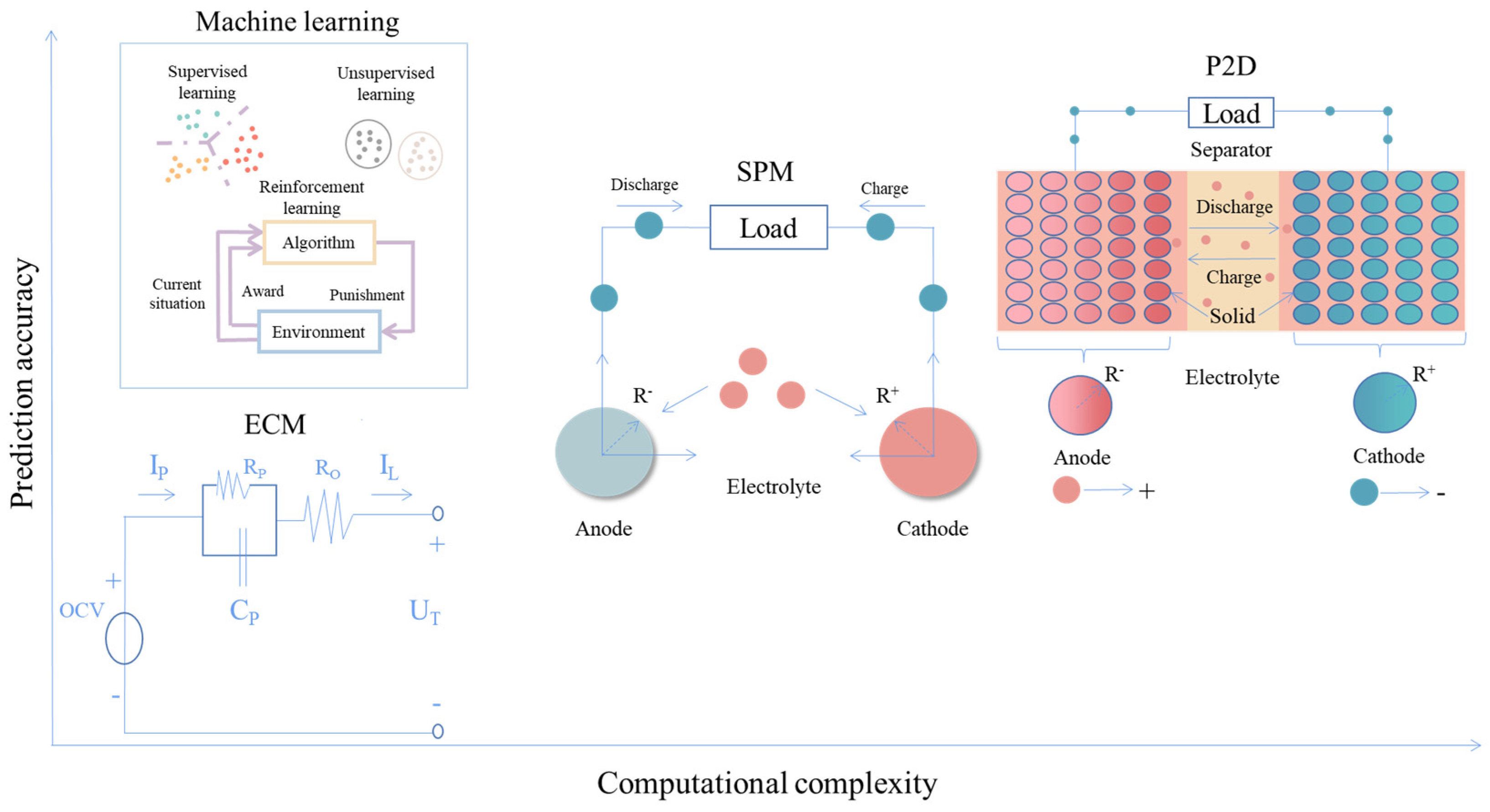

Energies, Free Full-Text

Learning JAX in 2023: Part 1 — The Ultimate Guide to Accelerating

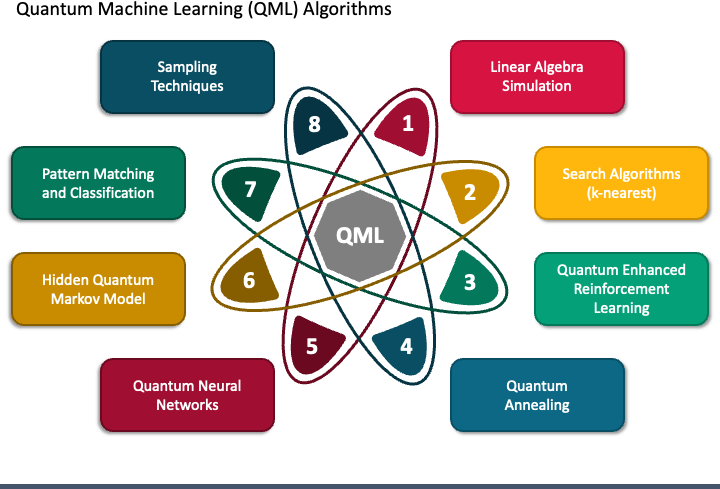

Exploring Quantum Machine Learning: Where Quantum Computing Meets

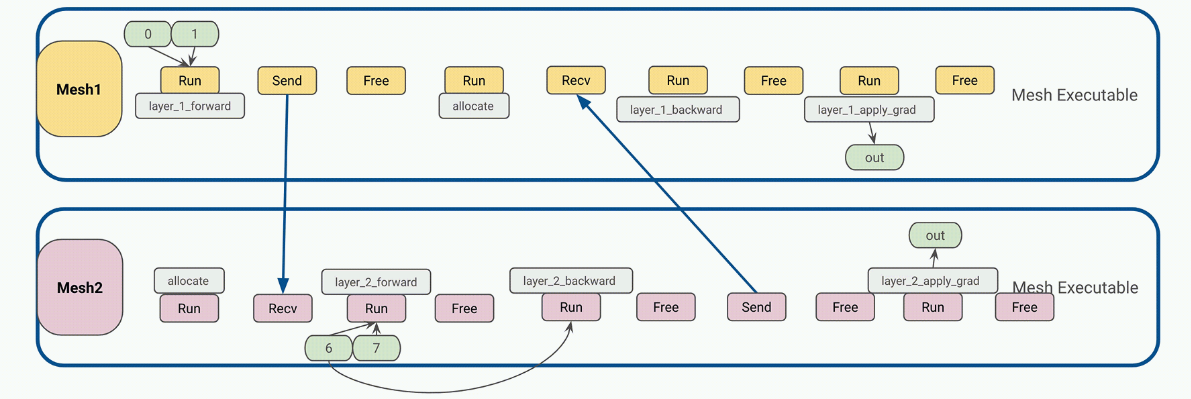

Efficiently Scale LLM Training Across a Large GPU Cluster with

How to train a deep learning model in the cloud
