(PDF) Incorporating representation learning and multihead attention

Description

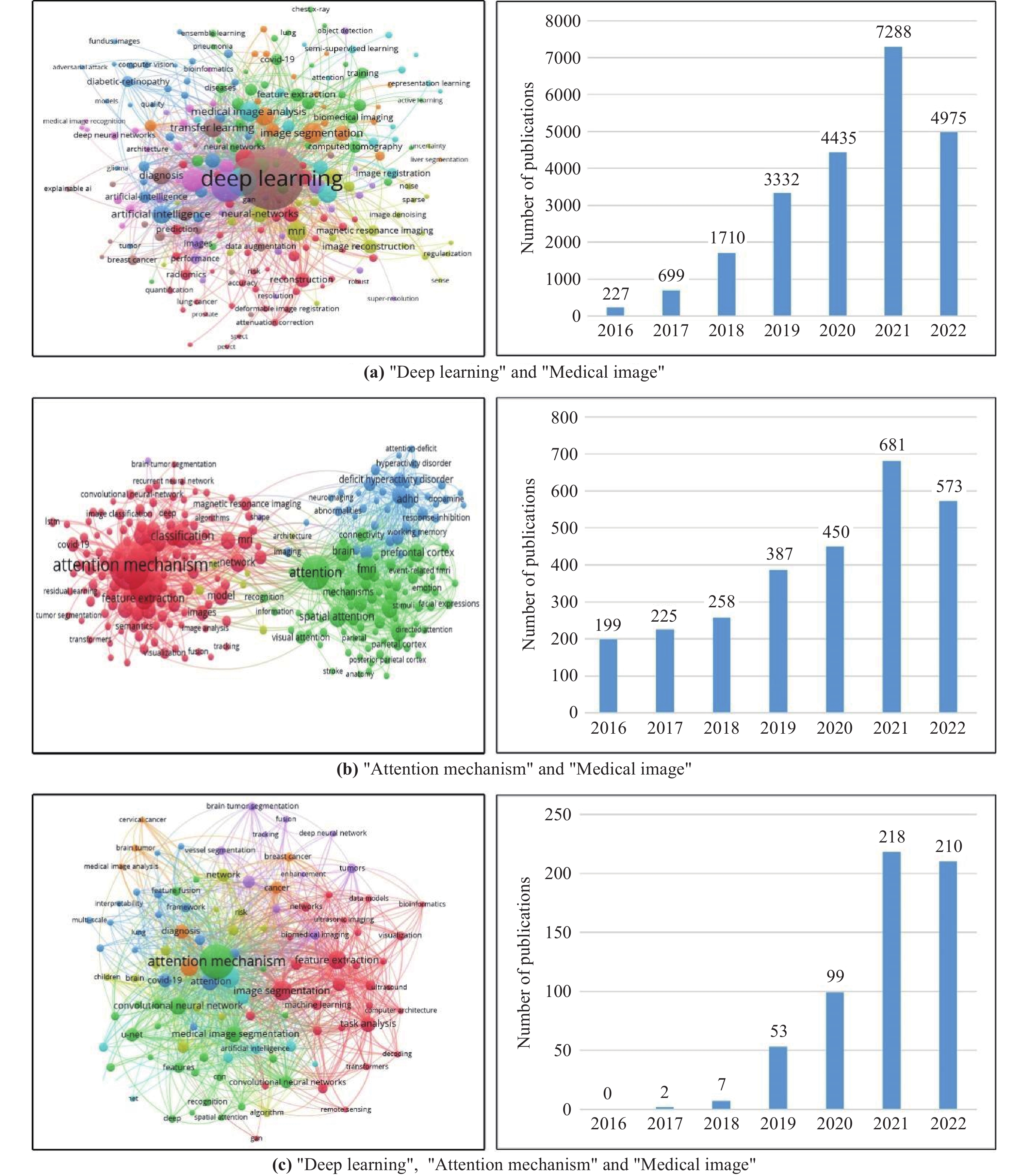

Deep Learning Attention Mechanism in Medical Image Analysis: Basics and Beyonds - Scilight

Transformer (machine learning model) - Wikipedia

Biomedical cross-sentence relation extraction via multihead attention and graph convolutional networks - ScienceDirect

(PDF) Contextual Attention Network: Transformer Meets U-Net

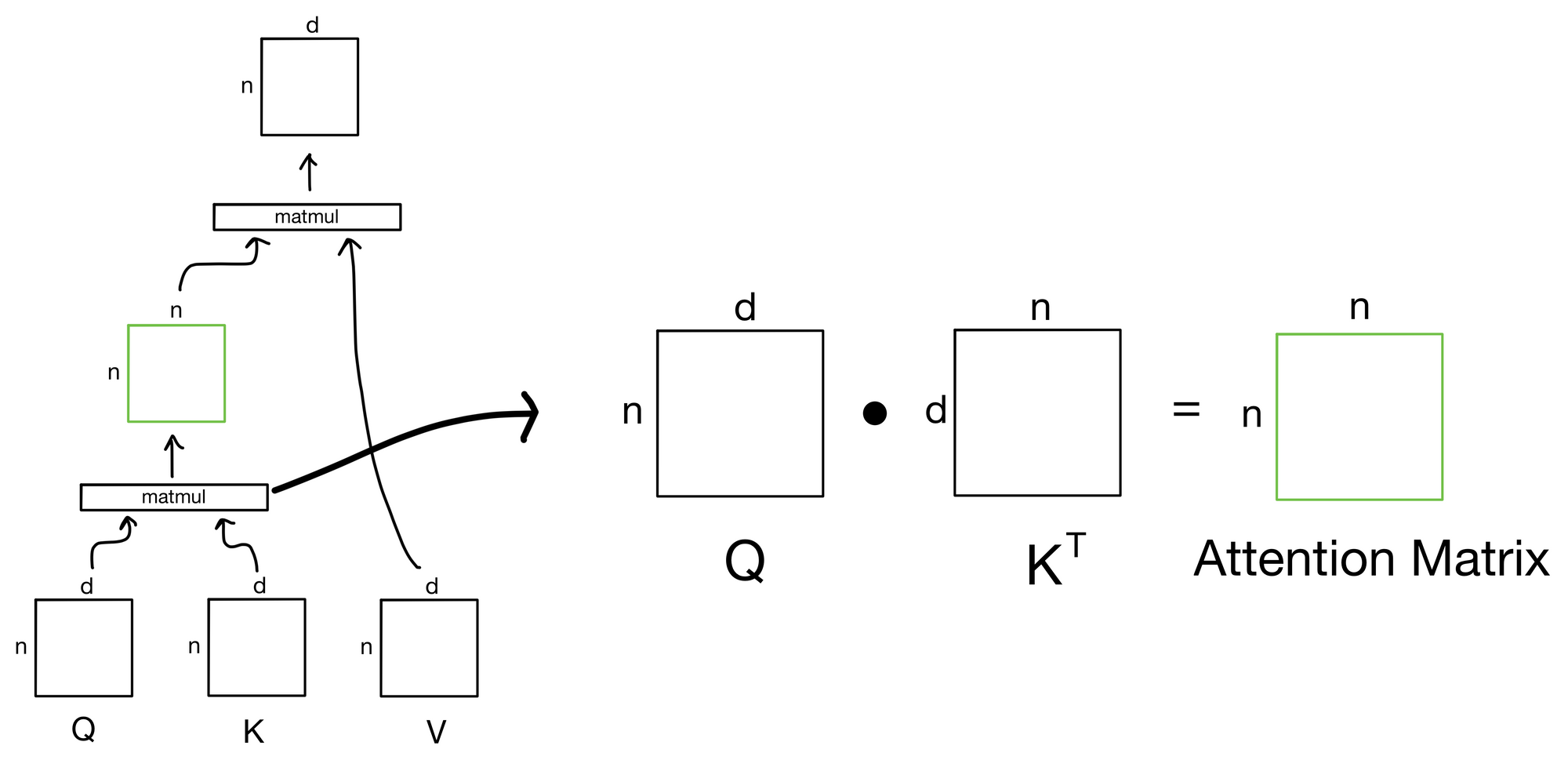

How to Implement Multi-Head Attention from Scratch in TensorFlow and Keras

[PDF] Dependency-Based Self-Attention for Transformer NMT

Incorporating representation learning and multihead attention to improve biomedical cross-sentence n-ary relation extraction, BMC Bioinformatics

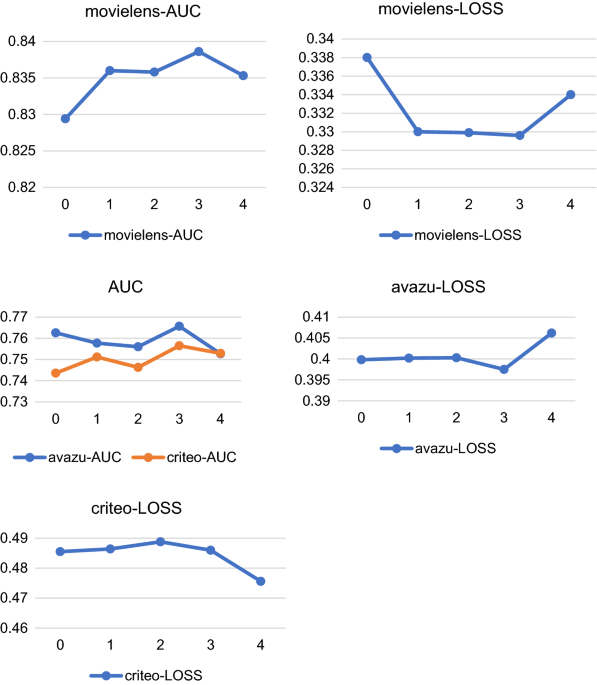

Click-through rate prediction model integrating user interest and multi-head attention mechanism, Journal of Big Data

Explained: Multi-head Attention (Part 1)

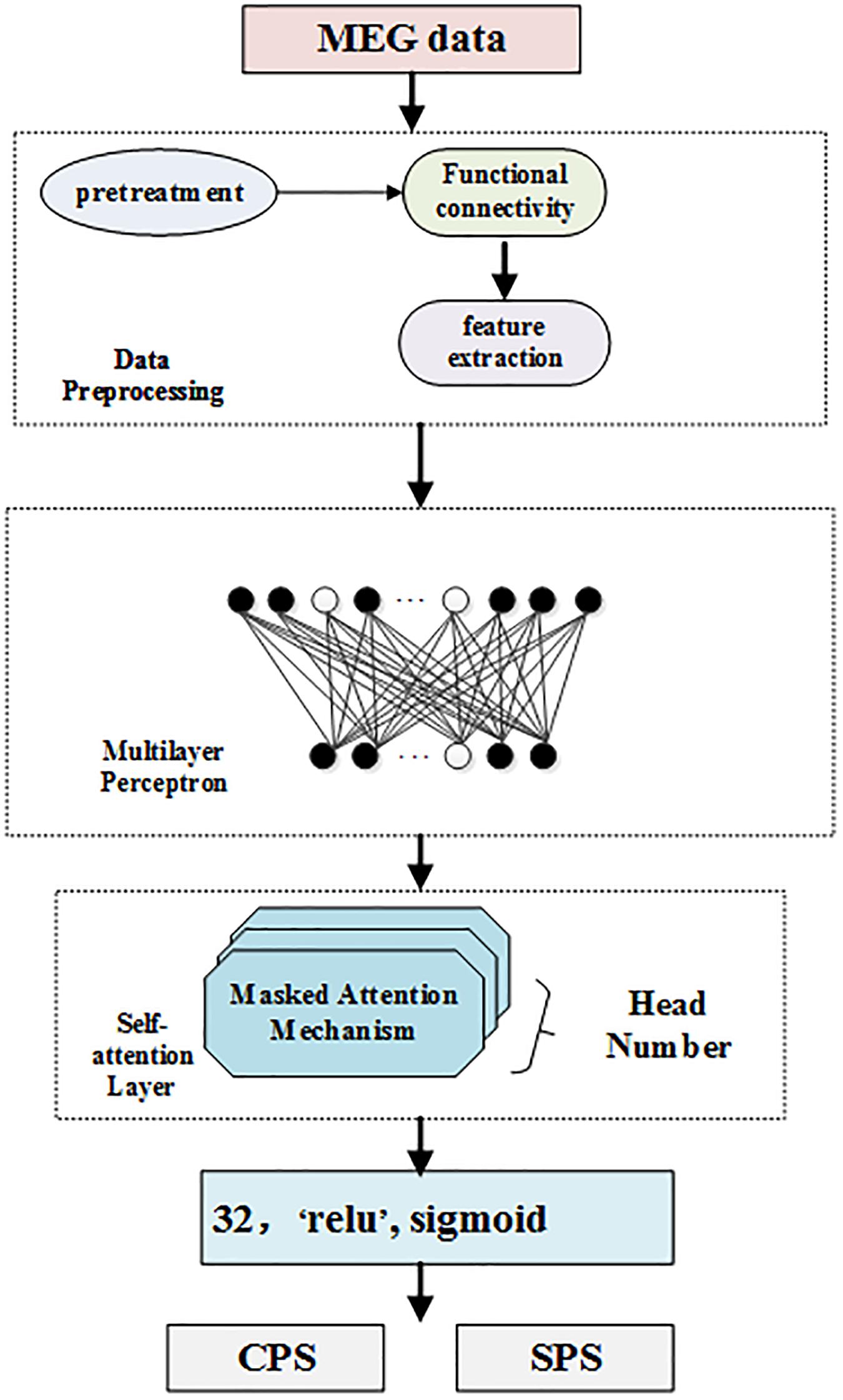

Frontiers | Multi-Head Self-Attention Model for Classification of Temporal Lobe Epilepsy Subtypes

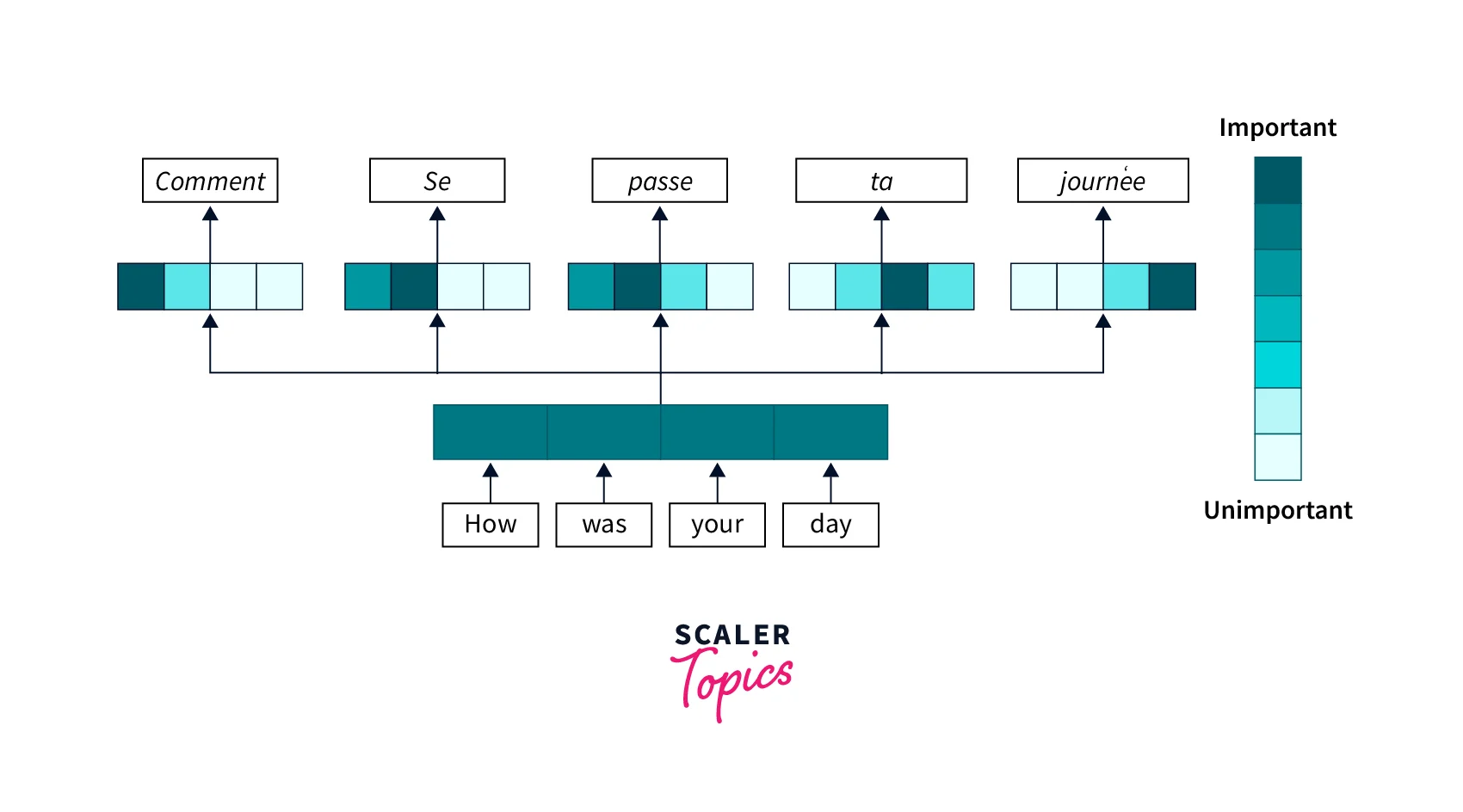

Attention Mechanism in Deep Learning - Scaler Topics

Integrated Multi-Head Self-Attention Transformer model for electricity demand prediction incorporating local climate variables - ScienceDirect
